Novel AI method sharpens 3D x-ray vision

February 20, 2026 Source: ASM International

X-ray tomography is a powerful tool that lets scientists and engineers peer inside objects in 3D, including computer chips and advanced battery materials, without cutting them open. It’s the same basic method behind medical CT scans. Scientists or technicians capture x-ray images as an object is rotated, and then advanced software mathematically reconstructs the object’s 3D internal structure. But imaging fine details on the nanoscale, like features on a microchip, requires a much higher spatial resolution than a typical medical CT scan, about 10,000 times higher.

The Hard X-ray Nanoprobe (HXN) beamline at the National Synchrotron Light Source II (NSLS-II), a U.S. Department of Energy (DOE) Office of Science user facility at DOE’s Brookhaven National Laboratory, achieves that kind of resolution with x-rays that are more than a billion times brighter than those used in traditional CT scans.

Tomography works well only when these projection images can be taken from all angles. In many real-world cases, however, that’s impossible. For example, scientists can’t rotate a flat computer chip through a full 180 degrees without blocking some of the x-rays. At high tilt angles, when the beam runs nearly parallel to the chip’s surface, fewer x-rays can penetrate the chip, which limits the viewing angles available to the measurement. The missing data from this angular range produces a “blind spot,” leading the reconstruction software to produce blurry, distorted images.
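To make the geometry concrete, the following minimal sketch estimates how transmission falls as a flat sample is tilted and how much of the 180-degree range is lost for a given tilt limit. It assumes a uniform slab and the Beer-Lambert attenuation law; the attenuation coefficient, thickness, tilt limit, and function names are illustrative assumptions, not values or code from the HXN experiment.

```python
import math

def transmission(mu_per_um, thickness_um, tilt_deg):
    """Fraction of x-rays transmitted through a flat slab tilted by tilt_deg.

    Beer-Lambert law with a path length of thickness / cos(tilt).
    At 0 degrees the beam is perpendicular to the slab surface.
    """
    path_um = thickness_um / math.cos(math.radians(tilt_deg))
    return math.exp(-mu_per_um * path_um)

def missing_wedge_fraction(max_tilt_deg):
    """Fraction of the 180-degree range not covered when the tilt
    is limited to the interval [-max_tilt_deg, +max_tilt_deg]."""
    return (180.0 - 2.0 * max_tilt_deg) / 180.0

# Hypothetical values chosen only for illustration.
MU = 0.02        # attenuation coefficient, 1/um
THICKNESS = 50   # slab thickness, um

for tilt in (0, 30, 60, 80):
    t = transmission(MU, THICKNESS, tilt)
    print(f"tilt {tilt:2d} deg: transmission {t:.4f}")

print(f"missing wedge at 60 deg limit: {missing_wedge_fraction(60):.3f}")
```

Because the path length grows as 1 / cos(tilt), transmission drops steeply near 90 degrees, which is why the highest angles are the ones that go unmeasured.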

“We call this the ‘missing wedge’ problem,” said Hanfei Yan, lead beamline scientist at the HXN beamline and corresponding author of this work. “For decades, this problem has limited the applications of x-ray and electron tomography in many areas of science and technology.”

To solve the problem, researchers at NSLS-II have developed a new method called the perception fused iterative tomography reconstruction engine (PFITRE). This novel approach combines the physics of x-rays with the power of artificial intelligence (AI).

The team trained a convolutional neural network, a type of AI model that automatically learns patterns in data, on simulated data. Convolutional neural networks use convolutional layers to detect important features, such as edges, textures, or shapes, and combine these features to make predictions, like identifying what’s in an image. The AI component captures perceptual knowledge about the sample, meaning what the team expects the solution to look like, and uses that knowledge to improve the reconstructed image.

Meanwhile, the physics-based model checks that the results still make sense scientifically. This process repeats several times until results from the AI and physics components converge, producing a reconstruction that is both accurate and visually clear. Their results were recently published in npj Computational Materials.

Unlike image correction in consumer tech, like cell phone cameras, scientific imaging must preserve accuracy, not just appearance. Scientists needed to devise a method to ensure that the corrected image is still consistent with the physical model and data. To do this, NSLS-II scientists embedded the AI into an iterative solving engine, a mathematical tool that tackles complex problems by repeatedly trying improved solutions, step by step, until it gets close enough to the right answer. The embedded AI acts as a “smart” regularizer, a function that limits overcorrection, leveraging physics-based modeling to ensure that the reconstructions stay faithful to the actual x-ray measurements.
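The alternation between a physics check and a learned prior can be illustrated with a toy one-dimensional problem. This is a sketch of the general idea only, not the PFITRE algorithm: a 3-point smoothing filter stands in for the trained network, the "measurement" is simply a signal with a block of samples missing, and all function names are hypothetical.

```python
def data_step(x, measured, step=0.5):
    """Gradient step on the data-fidelity term 0.5 * sum((x[i] - b[i])**2),
    taken over measured positions only. Unmeasured entries are untouched."""
    out = list(x)
    for i, b in measured.items():
        out[i] = x[i] - step * (x[i] - b)
    return out

def prior_step(x, weight=0.5):
    """Placeholder regularizer: blend each sample with a 3-point average.
    In the article's method this role is played by a trained network."""
    n = len(x)
    out = []
    for i in range(n):
        left = x[max(i - 1, 0)]
        right = x[min(i + 1, n - 1)]
        smooth = (left + x[i] + right) / 3.0
        out.append((1.0 - weight) * x[i] + weight * smooth)
    return out

def data_residual(x, measured):
    """Euclidean mismatch between the estimate and the measured samples."""
    return sum((x[i] - b) ** 2 for i, b in measured.items()) ** 0.5

def reconstruct(measured, n, max_iterations=2000, tol=1e-9):
    """Alternate the two steps until the estimate stops changing."""
    x = [0.0] * n
    for iteration in range(1, max_iterations + 1):
        x_new = prior_step(data_step(x, measured))
        change = max(abs(a - b) for a, b in zip(x_new, x))
        x = x_new
        if change < tol:
            break
    return x, iteration

# Toy "sample": a ramp with samples 4..7 never measured (the blind spot).
n = 12
truth = [i / (n - 1) for i in range(n)]
measured = {i: truth[i] for i in range(n) if not 4 <= i <= 7}

estimate, n_iter = reconstruct(measured, n)
print(n_iter, [round(v, 3) for v in estimate])
```

In this sketch the data step never touches the unmeasured samples, so only the prior can fill the gap, while the data step keeps pulling the measured samples back toward the recorded values. That division of labor mirrors the roles the article assigns to the AI and physics components.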

“We didn’t want an AI that just makes better images. We wanted an AI that works hand-in-hand with physics, so that the results are both visually clear and scientifically trustworthy,” said Chonghang Zhao, a postdoc at HXN and lead author of this work. “That’s the power of our method — combining the sophistication of AI with the physical model to ensure fidelity.”

The AI in PFITRE is built on a U-net, a neural network architecture with an encoder-decoder design that is popular for general image processing. The encoder stage learns and detects essential features, such as the edges, textures, and shapes of an input image, and the decoder stage rebuilds the image using those features to restore details and correct distortions. The researchers enhanced the U-net with structural modifications called residual dense blocks and dilated convolutions. These help the network capture information across multiple scales, from fine textures to larger structures, making the network better suited to handling the missing wedge problem in tomography. A model like this can’t learn on its own, though. It needs significant amounts of data to train on.
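A dilated convolution spaces its kernel taps apart so that one layer sees a wider stretch of the input without extra weights. The sketch below shows this in one dimension with a hand-written edge kernel; it is a generic illustration with hypothetical function names, not the PFITRE network, whose layers are learned and operate on images.

```python
def dilated_conv1d(signal, kernel, dilation=1):
    """'Valid' 1D convolution (cross-correlation, as in most deep-learning
    libraries) whose kernel taps are spaced `dilation` samples apart."""
    span = (len(kernel) - 1) * dilation + 1  # input samples seen per output
    out = []
    for start in range(len(signal) - span + 1):
        acc = 0.0
        for k, w in enumerate(kernel):
            acc += w * signal[start + k * dilation]
        out.append(acc)
    return out

def receptive_field(kernel_size, dilations):
    """Receptive field of stacked stride-1 layers with the given dilations."""
    field = 1
    for d in dilations:
        field += (kernel_size - 1) * d
    return field

edge_kernel = [-1.0, 0.0, 1.0]        # simple difference (edge) detector
step_signal = [0.0] * 8 + [1.0] * 8   # a single step edge

narrow = dilated_conv1d(step_signal, edge_kernel, dilation=1)
wide = dilated_conv1d(step_signal, edge_kernel, dilation=3)
print(narrow)
print(wide)
print(receptive_field(3, [1, 1, 1]), receptive_field(3, [1, 2, 4]))
```

With the same three weights, the dilated kernel responds to the edge over a wider window, and stacking layers with growing dilations enlarges the receptive field much faster than stacking undilated ones.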

Real scientific microscopy datasets are too limited to train a specialized AI model like PFITRE effectively, so the team relied on synthetic data. They generated training datasets by using natural images, simulated patterns, and scanning electron microscope images of circuits as the virtual samples being imaged. To make the training as realistic as possible, they introduced a “digital twin” of the experiment and created virtual data that mimics real-world conditions. They intentionally included noise, misalignment, and other imperfections so the AI could handle physical data.
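As a rough illustration of how imperfections might be injected into simulated projections, the sketch below drops angles beyond a tilt limit, shifts each remaining projection by a random offset, and adds Gaussian noise. It is a toy stand-in under stated assumptions: the projections are short 1D profiles, and the tilt limit, shift range, noise level, and function names are all hypothetical rather than taken from the team's digital twin.

```python
import random

def shift(profile, offset):
    """Shift a 1D projection by `offset` pixels, padding with zeros.
    This mimics a misaligned projection."""
    n = len(profile)
    return [profile[i - offset] if 0 <= i - offset < n else 0.0
            for i in range(n)]

def simulate_measurement(clean_projections, angles_deg, max_tilt_deg=60,
                         max_shift=2, noise_sigma=0.05, seed=0):
    """Return {angle: degraded projection} for angles inside the tilt limit."""
    rng = random.Random(seed)  # seeded so the example is reproducible
    measured = {}
    for angle, profile in zip(angles_deg, clean_projections):
        if abs(angle) > max_tilt_deg:
            continue  # inside the missing wedge: nothing is recorded
        offset = rng.randint(-max_shift, max_shift)
        moved = shift(profile, offset)
        measured[angle] = [v + rng.gauss(0.0, noise_sigma) for v in moved]
    return measured

# Hypothetical clean data: the same simple profile at every angle.
angles = list(range(-90, 90, 15))
clean = [[0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0] for _ in angles]

degraded = simulate_measurement(clean, angles)
print(sorted(degraded))
```

Pairing each degraded set with its clean original gives the kind of input and target examples a network could be trained on.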

While there is still work to be done to perfect this method, the benefits are clear. Samples that were once inaccessible due to their size or geometry can now produce informative data. A larger field of view allows more of a sample to be analyzed without falling victim to the missing wedge. This method could also prove beneficial in experiments that call for fewer measurements, enabling faster in-situ studies and reducing radiation dose in sensitive samples.

“This opens the door to detailed imaging of samples that couldn’t be studied before. That’s a very big step forward,” Yan said. “Whether it’s diagnosing defects in microchips or understanding why a battery degrades, PFITRE allows us to see details under conditions that were previously considered infeasible.”

*************************************************************************

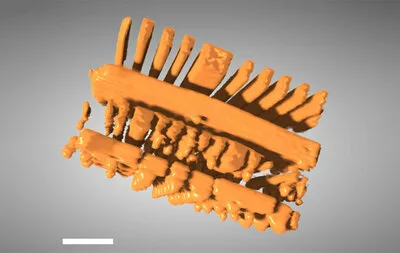

Image – This 3D image of an integrated circuit, showing slices through its thickness, was reconstructed with a new AI-based technique called the “perception fused iterative tomography reconstruction engine.”

For more information:

Brookhaven National Laboratory

https://www.bnl.gov/
